Summary

- Market Growth: The global EDA market is projected to expand significantly, driven by the demand for complex ICs in AI, automotive, and 5G sectors.

- Core Function: Electronic design automation (EDA) replaces manual circuit design with sophisticated software for simulation, verification, and manufacturing preparation.

- Workflow Essentials: The process moves from logical design and synthesis to physical layout and rigorous verification (DRC/LVS) before fabrication.

- Manufacturing Impact: EDA tools are critical for fabs and foundries to ensure high yield, reduce “spin” costs, and maintain compatibility with advanced packaging.

- Future Outlook: AI integration and cloud-based EDA are the next frontier, optimizing power, performance, and area (PPA) faster than human engineers can manage by hand.

Introduction

The complexity of modern microchips is difficult to overstate. A single NVIDIA H100 GPU contains 80 billion transistors. If a human engineer attempted to lay out those transistors manually at a rate of one per second, finishing the design would take over 2,500 years. This impossibility is where electronic design automation steps in. According to Grand View Research (2023), the global EDA market size was valued at USD 11.10 billion in 2022 and is expected to grow at a compound annual growth rate (CAGR) of 9.1% from 2023 to 2030.
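The 2,500-year figure can be checked with a few lines of arithmetic. The sketch below assumes an engineer who works around the clock with no breaks, which is of course generous; the constant and function names are illustrative only.

```python
# Back-of-the-envelope check of the manual-layout estimate.
TRANSISTORS = 80_000_000_000            # H100 transistor count quoted above
SECONDS_PER_YEAR = 365.25 * 24 * 3600   # average year, including leap days


def years_to_place(transistors: int, per_second: float = 1.0) -> float:
    """Years needed to place `transistors` at `per_second` placements per second."""
    return transistors / per_second / SECONDS_PER_YEAR


years = years_to_place(TRANSISTORS)
print(f"{years:,.0f} years")  # prints "2,535 years"
```

At one transistor per second the result lands just above 2,500 years, consistent with the claim.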

EDA is the backbone of the semiconductor industry. It is a category of software tools used to design electronic systems such as integrated circuits (ICs) and printed circuit boards (PCBs). For semiconductor fabs, foundries, and equipment manufacturers, these tools bridge the gap between a theoretical concept and a physical wafer. Without them, the Industry 4.0 advancements we see in smart factories today would halt immediately.

In this guide, we break down what electronic design automation is, how the workflow functions, and why the future of electronic design automation relies heavily on artificial intelligence and cloud computing.

What Is Electronic Design Automation?

At its core, electronic design automation (EDA) is the software toolchain that enables chip designers to define, plan, design, implement, verify, and manufacture semiconductor devices. Before EDA became standard in the 1980s, integrated circuits were often designed by hand using geometric tapes and manual layout techniques. As Moore’s Law took effect and transistor counts skyrocketed, manual methods became obsolete.

Today, EDA encompasses a massive ecosystem. It covers everything from writing code in Verilog or VHDL to running thermal simulations and finalizing the GDSII file sent to the foundry. For a semiconductor automation buyer or a process engineer in a fab, understanding EDA is vital because the output of these tools dictates the manufacturing recipe.

The Nuts and Bolts: How the EDA Workflow Functions

The EDA workflow is a funnel. It starts with abstract logic and ends with a physical blueprint. While every project differs, the standard path involves three main phases.

Design Entry and Simulation

The process begins with the specification. Designers describe the behavior of the chip using a Hardware Description Language (HDL). They do not draw gates yet; they write code.

- Behavioral Simulation: Engineers test this code to ensure the logic holds up. If the logic says “1 + 1 = 2,” the simulator confirms the output is actually 2.

- Synthesis: This step translates the abstract code into a gate-level netlist. It converts high-level instructions into actual logic gates (AND, OR, NOT) that can be placed on silicon.
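To make the two steps above concrete, here is a minimal Python sketch of a half adder described two ways: once behaviorally (what the block does) and once as a tiny gate-level netlist (which gates implement it). The netlist format, the `GATES` table, and all function names are hypothetical and chosen for illustration; real flows use an HDL such as Verilog or VHDL and a synthesis tool, and this example treats XOR as a single library cell rather than building it from AND, OR, and NOT.

```python
from itertools import product
from typing import Dict, Tuple


def behavioral_half_adder(a: int, b: int) -> Tuple[int, int]:
    """Behavioral model: states what the block computes, not how."""
    total = a + b
    return total & 1, total >> 1  # (sum bit, carry bit)


# Hypothetical gate-level netlist of the kind a synthesis step might emit.
# Each entry maps an output net to (gate type, input nets), in evaluation order.
NETLIST = {
    "sum": ("XOR", ("a", "b")),
    "carry": ("AND", ("a", "b")),
}

GATES = {
    "AND": lambda x, y: x & y,
    "OR": lambda x, y: x | y,
    "XOR": lambda x, y: x ^ y,
}


def simulate_netlist(netlist, inputs: Dict[str, int]) -> Tuple[int, int]:
    """Evaluate each gate in order and return the (sum, carry) nets."""
    nets = dict(inputs)
    for out, (gate, ins) in netlist.items():
        nets[out] = GATES[gate](*(nets[i] for i in ins))
    return nets["sum"], nets["carry"]


def equivalent() -> bool:
    """Check that the netlist matches the behavioral model on every input."""
    return all(
        behavioral_half_adder(a, b) == simulate_netlist(NETLIST, {"a": a, "b": b})
        for a, b in product((0, 1), repeat=2)
    )


print(equivalent())  # prints True
```

Exhaustively comparing all four input combinations works only because the block is tiny; for real designs, simulators and equivalence checkers rely on far more scalable techniques.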

Physical Design (Place and Route)

Once the netlist exists, the chip design software must figure out where to put everything. This is the “Tetris” phase of chip design.

- Floorplanning: Deciding where major blocks (like the CPU core or memory) go on the die.

- Placement and Routing: The software places millions of standard cells and connects them with copper wiring (interconnects). The goal is to minimize wire length and optimize signal speed.
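One common way to estimate wire length before routing is half-perimeter wirelength (HPWL): for each net, take the bounding box around its pins and add the box's width and height. The sketch below scores two candidate placements this way. The cell names, coordinates, and nets are made up for illustration, and each cell is treated as a single point.

```python
def hpwl(pins):
    """Half-perimeter wirelength of one net, given its (x, y) pin positions."""
    xs = [x for x, _ in pins]
    ys = [y for _, y in pins]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))


def total_wirelength(placement, nets):
    """Sum HPWL over all nets for a placement mapping cell name -> (x, y)."""
    return sum(hpwl([placement[cell] for cell in net]) for net in nets)


# Hypothetical design: four cells and three nets connecting them.
nets = [("u1", "u2"), ("u2", "u3"), ("u1", "u3", "u4")]

spread_out = {"u1": (0, 0), "u2": (10, 0), "u3": (0, 10), "u4": (10, 10)}
compact = {"u1": (0, 0), "u2": (1, 0), "u3": (1, 1), "u4": (0, 1)}

print(total_wirelength(spread_out, nets))  # prints 50
print(total_wirelength(compact, nets))     # prints 4
```

A placer would search for the arrangement with the lowest score while also respecting constraints this toy ignores, such as cell overlap, timing, and routing congestion.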

Verification and Sign-off

Before a design goes to the fab, it must be perfect. A mistake here costs millions of dollars in wasted wafers.

- DRC (Design Rule Check): Ensures the layout meets the foundry’s manufacturing constraints (e.g., minimum wire width).

- LVS (Layout vs. Schematic): Verifies that the physical layout matches the original logical design.

- Parasitic Extraction: Calculates the unintended resistance and capacitance of the real physical wires so that timing and signal behavior can be re-simulated with those effects included.
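The three checks above can be sketched in a few lines of Python. This is a heavily simplified illustration, not how sign-off tools work internally: the minimum-width value, the per-micron resistance and capacitance, the data layout, and every function name are assumptions made up for the example.

```python
MIN_WIDTH_NM = 20  # hypothetical foundry rule, not a real process value


def drc_min_width(wires, min_width=MIN_WIDTH_NM):
    """DRC sketch: return the names of wires narrower than the minimum width."""
    return [name for name, w in wires.items() if w["width_nm"] < min_width]


def lvs_match(schematic_nets, layout_nets):
    """LVS sketch: compare connectivity as sets of pin groups, ignoring order."""
    def normalize(nets):
        return {frozenset(pins) for pins in nets}
    return normalize(schematic_nets) == normalize(layout_nets)


def wire_rc(length_um, r_per_um, c_per_um):
    """Extraction sketch: lumped R (ohms), C (farads), and RC product (seconds)."""
    r = r_per_um * length_um
    c = c_per_um * length_um
    return r, c, r * c


# Hypothetical layout data.
wires = {
    "clk": {"width_nm": 24, "length_um": 100.0},
    "rst": {"width_nm": 16, "length_um": 40.0},
}
schematic = [("u1.out", "u2.in"), ("u2.out", "u3.in")]
layout = [("u3.in", "u2.out"), ("u2.in", "u1.out")]

print(drc_min_width(wires))          # prints ['rst']
print(lvs_match(schematic, layout))  # prints True
r, c, rc = wire_rc(wires["clk"]["length_um"], r_per_um=2.0, c_per_um=0.2e-15)
print(f"R={r:.0f} ohm, RC={rc:.2e} s")
```

Production rule decks contain thousands of geometric rules, LVS compares full device-level graphs, and extraction models coupling between neighboring wires; the sketch only shows the shape of each question being asked.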

Why Semiconductor Fabs Rely on EDA

For manufacturing units, assembly plants, and OSATs (Outsourced Semiconductor Assembly and Test), EDA is not merely a design tool. It is a yield-protection mechanism.

Reducing Manufacturing Spins

A “spin” refers to one full pass of a design through the manufacturing process. If a design defect is found after fabrication, the wafers must be discarded, the design fixed, and the chip spun again with new masks. Since a single mask set for a 5nm process can cost millions of dollars, rigorous EDA simulation is used to get “first-time-right” silicon.

Compatibility with Advanced Packaging

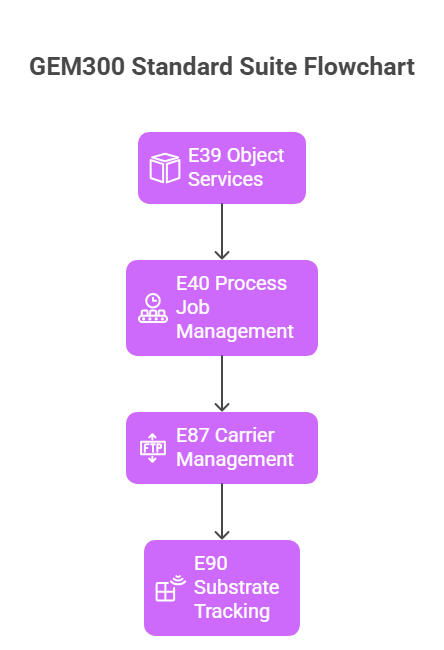

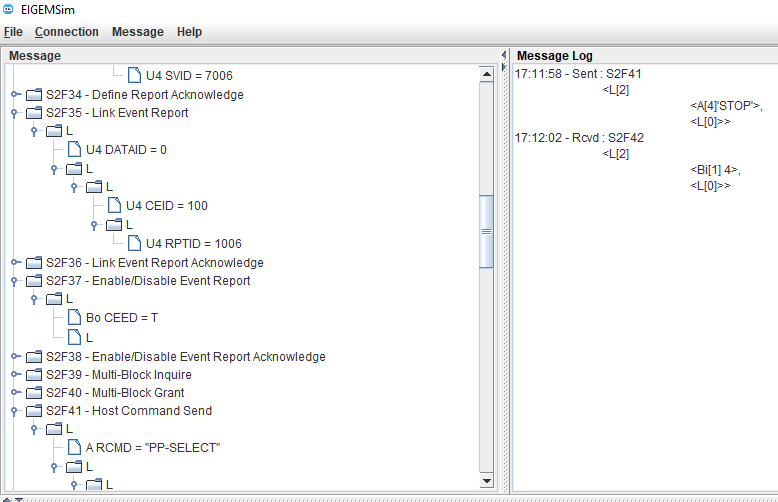

Modern manufacturing involves complex packaging, such as 2.5D and 3D ICs (stacking chips vertically). Electronic design automation tools now integrate with manufacturing equipment data to predict how heat and stress affect these stacked dies during assembly. This connects directly to smart factory initiatives where equipment connectivity (SECS/GEM) feeds data back to design teams to improve yield.

Note: For factories upgrading to GEM300 standards or retrofitting legacy equipment, the link between EDA data and machine performance is becoming a critical data point for MES integration.

Key Tools in the Chip Design Software Arsenal

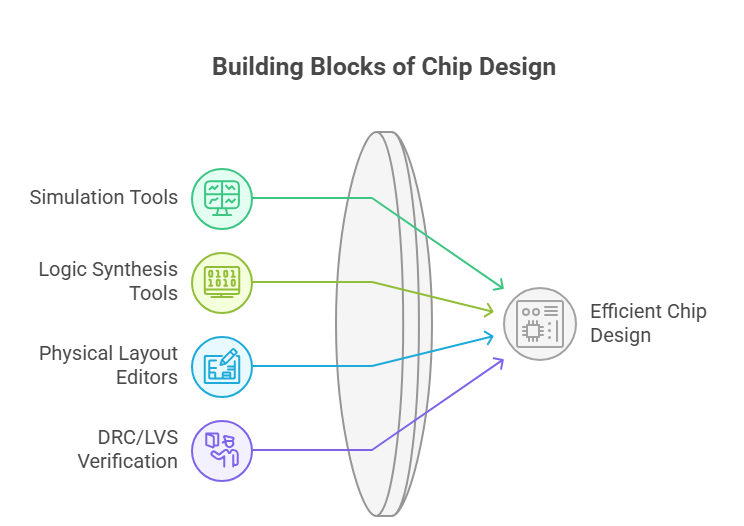

The industry relies on a few heavy hitters for these tasks. While we won’t list every vendor, we can categorize the software types that keep the industry moving.

- Simulation Tools: These predict circuit behavior. SPICE (Simulation Program with Integrated Circuit Emphasis) remains the grandfather of all circuit simulators.

- Logic Synthesis Tools: These compilers turn code into circuits.

- Physical Layout Editors: The CAD tools for drawing the actual polygons on the chip layers.

- DRC/LVS Verification Tools: The final gatekeepers before manufacturing.
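To give a feel for what "predicting circuit behavior" means numerically, the sketch below steps a single resistor-capacitor circuit through time using backward Euler integration and compares the result with the known analytic answer. It is a toy illustration of time-stepped circuit simulation in general, not SPICE's algorithm or netlist format; the component values and function name are assumptions.

```python
import math


def simulate_rc_step(r_ohm, c_farad, v_in, t_end, steps):
    """Backward-Euler transient of a series RC circuit driven by a voltage step.

    Returns a list of (time, capacitor voltage) samples starting at t = 0.
    """
    dt = t_end / steps
    a = dt / (r_ohm * c_farad)
    v = 0.0
    trace = [(0.0, v)]
    for n in range(1, steps + 1):
        # From C * (v_new - v) / dt = (v_in - v_new) / R, solved for v_new.
        v = (v + a * v_in) / (1.0 + a)
        trace.append((n * dt, v))
    return trace


# Hypothetical values: 1 kOhm and 1 uF give a time constant of 1 ms.
R, C = 1_000.0, 1e-6
trace = simulate_rc_step(R, C, v_in=1.0, t_end=5e-3, steps=5000)

t_final, v_final = trace[-1]
v_exact = 1.0 - math.exp(-t_final / (R * C))
print(f"simulated={v_final:.4f} analytic={v_exact:.4f}")
```

After five time constants the capacitor voltage is within about 1% of the input, and the simulated and analytic values agree closely. A full simulator solves the same kind of equations for very large networks of nonlinear devices at once.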

The Future of Electronic Design Automation

The future of electronic design automation is shifting away from static software toward intelligent, adaptive systems. The demand for lower power consumption and higher performance drives these trends.

AI and Machine Learning in Design

Designers are now using AI to handle the tedious parts of the EDA workflow. Synopsys and Cadence have introduced AI-driven tools that can explore billions of potential layout options to find the optimal arrangement for power and speed.
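The idea of automated design-space exploration can be illustrated with a deliberately simple stand-in. The sketch below uses plain random search over two made-up design knobs and a made-up cost function that loosely mixes power, delay, and area. Random search is swapped in here for the far more sophisticated learning-based methods commercial tools use, and nothing below reflects any vendor's implementation.

```python
import random


def ppa_cost(width, pipeline_stages):
    """Hypothetical cost: a made-up stand-in for power, delay, and area."""
    power = 0.5 * width * pipeline_stages
    delay = 100.0 / (width * pipeline_stages)
    area = width + 2.0 * pipeline_stages
    return power + delay + area


def random_search(trials, seed=0):
    """Sample random (width, stages) candidates and keep the cheapest one."""
    rng = random.Random(seed)
    best = None
    for _ in range(trials):
        candidate = (rng.randint(1, 16), rng.randint(1, 8))
        cost = ppa_cost(*candidate)
        if best is None or cost < best[1]:
            best = (candidate, cost)
    return best


best_candidate, best_cost = random_search(trials=200)
print(best_candidate, round(best_cost, 2))
```

With only 128 possible combinations this toy could be solved by checking every option. Real design spaces are far too large for that, which is why guided search is attractive.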

So, will AI replace the chip designer? Unlikely. AI acts more like a hyper-efficient assistant, handling the “brute force” work of routing wires so engineers can focus on architecture.

Cloud-Based EDA

Historically, companies ran EDA tools on massive on-premise server farms to protect IP. However, the sheer computing power required for 3nm and 2nm designs is pushing the industry toward the cloud. Cloud-based EDA allows small design houses to access supercomputing power on demand, leveling the playing field.

3D-IC and Chiplet Standards

As Moore’s Law slows down, the industry is moving toward “chiplets,” modular dies connected in a single package. EDA tools are evolving to handle the thermal and electromagnetic challenges of putting multiple active chips right next to each other.

Comparison: Legacy vs. Modern EDA

| Feature | Legacy EDA | Modern AI-Driven EDA |
| --- | --- | --- |
| Optimization | Manual iteration by engineers | AI explores the design space automatically |
| Compute | On-premise server farms | Hybrid or fully cloud-native |
| Focus | Single monolithic die | Chiplets and 3D-IC packaging |
| Speed | Weeks for physical closure | Days or hours for physical closure |

Conclusion

Electronic design automation has evolved from a niche drafting aid to the central nervous system of the semiconductor supply chain. For fabs, foundries, and automation engineers, understanding these tools is essential for bridging the gap between digital concepts and tangible silicon. As we move toward 3D-IC structures and AI-integrated manufacturing, the synergy between design software and factory automation will only deepen.

Whether you are retrofitting legacy equipment or building a fully automated smart factory, the data starts with the design.
